How Meta Modernized WebRTC: A Step-by-Step Guide to Escaping the Forking Trap

Introduction

Real-time communication (RTC) powers billions of interactions across Meta's platforms—from Messenger and Instagram video chats to low-latency cloud gaming and VR casting on Meta Quest. For years, Meta relied on a deeply forked version of the open-source WebRTC library to deliver the performance and custom features needed for these diverse use cases. However, maintaining a permanent fork of a large open-source project like WebRTC creates a dangerous trap: the internal version drifts further from the upstream community with each new feature and bug fix, eventually making it prohibitively expensive to merge upstream improvements. This guide explains how Meta broke free from this “forking trap” by migrating over 50 use cases to a modular, dual-stack architecture built on top of pristine upstream WebRTC. We’ll walk you through the exact steps Meta’s engineering team followed—from designing a safe A/B testing framework to solving the static linking puzzle—so you can apply these lessons to your own large-scale projects.

What You Need

- A large monorepo containing your application source code and multiple versions of WebRTC (or any other actively maintained open-source library you’ve forked).

- Deep familiarity with C++ and the One Definition Rule (ODR) – static linking two versions of the same library is a core challenge.

- Experience with continuous integration (CI) and A/B testing frameworks to safely roll out changes to billions of users.

- A team of engineers dedicated to migrating features one by one, and a clear timeline for each migration wave.

- Automated tooling to detect symbol collisions, manage patches, and verify binary size/performance regressions.

Step 1: Assess the Forking Trap and Define Success

Before any migration, Meta’s team quantified the cost of their fork. They identified all 50+ use cases (audio/video calls, screen sharing, cloud gaming, VR casting, etc.) that depended on the forked WebRTC. They also measured how far behind upstream they had fallen—in terms of missing security patches, performance improvements, and new features. A clear success metric was defined: every use case must run on pristine upstream WebRTC without regressions, while preserving all custom optimizations (like specialized video codecs or network transport layers). This step is crucial: you need a baseline to compare against and a clear “done” state for the migration.

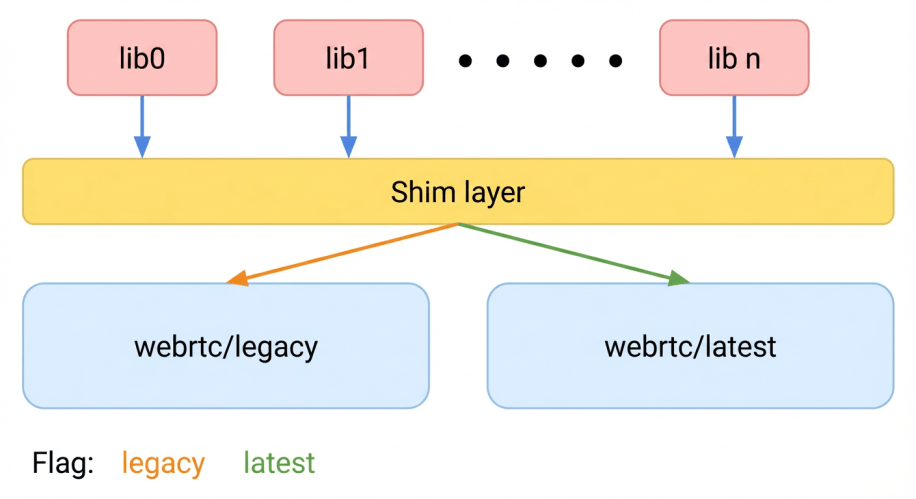

Step 2: Design a Dual‑Stack Architecture for Safe A/B Testing

Meta’s key insight was that a one-time upgrade was too risky. Instead, they built a dual‑stack system where both the legacy forked version and the new upstream-based version could run simultaneously inside the same app. This allowed them to route individual users to either stack using A/B testing infrastructure. The architecture had to satisfy two constraints:

- Dynamic switching – a user session could be assigned to the legacy or new stack without affecting other users.

- No runtime conflicts – both versions had to coexist without clashing over shared resources.

The team designed a thin abstraction layer that mapped each WebRTC API call to either the legacy or new implementation. This layer also included a “feature gate” that controlled which version each user saw, enabling gradual rollout and rollback.
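To make the idea concrete, here is a minimal sketch of such a layer with a feature gate, written in Python for brevity. All names (`CallStack`, `LegacyStack`, `UpstreamStack`, `select_stack`, the experiment name) are hypothetical and not taken from Meta's code. It only illustrates one common approach: hash the user ID into a stable bucket and route users below the rollout percentage to the new stack.

```python
import hashlib
from typing import Protocol


class CallStack(Protocol):
    """Hypothetical interface the application codes against."""

    def start_call(self, peer: str) -> str: ...


class LegacyStack:
    """Stands in for the forked WebRTC build."""

    def start_call(self, peer: str) -> str:
        return f"legacy:{peer}"


class UpstreamStack:
    """Stands in for the upstream-based build."""

    def start_call(self, peer: str) -> str:
        return f"upstream:{peer}"


def bucket(user_id: str, experiment: str) -> int:
    """Map a user to a stable bucket in [0, 100) for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100


def select_stack(user_id: str, rollout_percent: int,
                 experiment: str = "upstream_webrtc") -> CallStack:
    """Feature gate: users whose bucket is below rollout_percent get the new stack."""
    if bucket(user_id, experiment) < rollout_percent:
        return UpstreamStack()
    return LegacyStack()


if __name__ == "__main__":
    stack = select_stack("user-42", rollout_percent=10)
    print(stack.start_call("user-7"))
```

Because the bucket depends only on the user ID and the experiment name, a user keeps the same assignment across sessions, raising the percentage only ever adds users to the new stack, and setting it to 0 acts as a rollback.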

Step 3: Solve the Static Linking Problem (ODR Violations)

The biggest technical challenge was statically linking two versions of WebRTC into the same binary. C++'s One Definition Rule (ODR) allows only one definition of each non-inline function or variable in a program, and two copies of the same library define thousands of identical names. Depending on the symbol, the linker either rejects the duplicates or silently keeps one copy, so code built against one version can end up calling into the other. Meta's solution combined symbol renaming with symbol hiding. Custom build tooling renamed every global symbol in the new upstream version (e.g., prefixing all functions with upstream_), which prevented collisions at link time. Additionally, linker version scripts hid internal symbols of the legacy version. The result: both libraries could coexist in the same address space without any symbol conflicts. This step alone required months of careful engineering and testing to ensure no symbol was missed.
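The article does not describe Meta's tooling in detail, so the following is only a sketch of how collision detection and renaming could be automated. It assumes you have `nm`-style symbol listings for both builds, and that renaming is done with GNU `objcopy --redefine-syms`, which reads one "old new" pair per line. The sample listings, function names, and the choice of objcopy are assumptions for illustration.

```python
from typing import List, Set

# Illustrative `nm` output for the two builds (made-up sample data).
LEGACY_NM = """\
0000000000000000 T _ZN6webrtc14PeerConnection5CloseEv
0000000000000040 T legacy_only_helper
                 U malloc
"""
UPSTREAM_NM = """\
0000000000000000 T _ZN6webrtc14PeerConnection5CloseEv
0000000000000080 W _ZN6webrtc9NewThingyEv
                 U malloc
"""


def defined_globals(nm_output: str) -> Set[str]:
    """Return externally visible symbols that the listing defines.

    In nm output an uppercase type letter marks a global symbol;
    'U' means undefined (only referenced), so it is skipped.
    """
    symbols: Set[str] = set()
    for line in nm_output.splitlines():
        parts = line.split()
        if len(parts) < 2:
            continue
        kind, name = parts[-2], parts[-1]
        if len(kind) == 1 and kind.isupper() and kind != "U":
            symbols.add(name)
    return symbols


def find_collisions(legacy_nm: str, upstream_nm: str) -> List[str]:
    """Symbols defined by both builds, i.e. potential ODR violations."""
    return sorted(defined_globals(legacy_nm) & defined_globals(upstream_nm))


def redefine_map(symbols: List[str], prefix: str = "upstream_") -> str:
    """Build the 'old new' file consumed by `objcopy --redefine-syms`."""
    return "".join(f"{name} {prefix}{name}\n" for name in symbols)


if __name__ == "__main__":
    print("collisions:", find_collisions(LEGACY_NM, UPSTREAM_NM))
    print(redefine_map(sorted(defined_globals(UPSTREAM_NM))), end="")
```

Running the collision check again on the renamed objects, and failing the build if the list is non-empty, is one way to catch a missed symbol early. Note that a prefixed mangled C++ name no longer demangles, which is one reason a real setup might rename namespaces at the source level instead.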

Step 4: Implement a Continuous Upgrade Workflow

With the dual-stack architecture in place, Meta needed a repeatable process to regularly pull in new upstream WebRTC releases and test them against all 50+ use cases. They built a CI pipeline that:

- Pulls the latest upstream WebRTC commit and applies Meta’s proprietary patches on top.

- Builds both the legacy and new stacks side by side.

- Runs a comprehensive suite of unit, integration, and end-to-end tests covering every use case.

- Automatically flags regressions in performance, binary size, or correctness.

- Enables A/B testing of the upgraded stack in production, starting at 1% of users and gradually increasing to 100% if no issues are found.

This workflow ensured that Meta never fell behind again: they could upgrade with confidence every few weeks, not years.
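The last two pipeline stages can be sketched as a regression gate feeding a rollout ramp. This is a simplified illustration, not Meta's pipeline: the metric names, budgets, and the intermediate ramp steps between 1% and 100% are assumptions, and every metric is treated as "lower is better" with a non-zero baseline.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class Budget:
    metric: str
    max_increase_pct: float  # allowed increase over the baseline, in percent


def find_regressions(baseline: Dict[str, float],
                     candidate: Dict[str, float],
                     budgets: Sequence[Budget]) -> List[str]:
    """Return one message per metric that grew beyond its budget.

    Assumes lower is better and that baseline values are non-zero.
    """
    regressions = []
    for budget in budgets:
        base = baseline[budget.metric]
        increase_pct = (candidate[budget.metric] - base) / base * 100.0
        if increase_pct > budget.max_increase_pct:
            regressions.append(
                f"{budget.metric}: +{increase_pct:.1f}% "
                f"(budget {budget.max_increase_pct:.1f}%)"
            )
    return regressions


# Only the 1% start and 100% end come from the text; the middle steps are assumed.
RAMP = (1, 5, 25, 50, 100)


def next_rollout_percent(current: int, regressions: List[str]) -> int:
    """Advance one ramp step when healthy; roll back to 0 on any regression."""
    if regressions:
        return 0
    for step in RAMP:
        if step > current:
            return step
    return 100


if __name__ == "__main__":
    budgets = [Budget("binary_size_kb", 2.0), Budget("p50_latency_ms", 1.0)]
    baseline = {"binary_size_kb": 1000.0, "p50_latency_ms": 100.0}
    candidate = {"binary_size_kb": 1030.0, "p50_latency_ms": 100.0}
    problems = find_regressions(baseline, candidate, budgets)
    print(problems, next_rollout_percent(1, problems))
```

Keeping the budgets in data rather than in code makes it easy to tighten them per use case as confidence grows.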

Step 5: Migrate Use Cases One by One

Instead of a big-bang migration, Meta moved each of the 50+ use cases individually. For each use case, engineers:

- Removed all code that directly tied it to the forked WebRTC internals.

- Replaced that dependency with the new abstraction layer, pointing to the upstream stack.

- Ran A/B tests in production, verifying that metrics (audio/video quality, latency, crash rate) did not regress.

- Once confirmed, disabled the legacy stack for that use case permanently.

This incremental approach minimized risk and allowed the team to learn from each migration. The order was strategic: easier, less-critical use cases (e.g., simple audio calls) were migrated first to build confidence; complex ones (e.g., cloud gaming) came later. Over the course of several years, all 50+ use cases were successfully migrated.
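One way to keep track of such a migration is a small state machine per use case, with one phase for each of the four steps above. The sketch below is illustrative only: the phase names, the numeric `risk` score used for ordering, and the example use cases are assumptions, not Meta's actual tracking system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Phase(Enum):
    ON_FORK = 1          # still tied to forked WebRTC internals
    ON_ABSTRACTION = 2   # goes through the abstraction layer
    AB_TESTING = 3       # upstream stack under A/B test in production
    LEGACY_DISABLED = 4  # legacy stack switched off for this use case


@dataclass
class UseCase:
    name: str
    risk: int  # hypothetical score: 1 = simplest, higher = more complex
    phase: Phase = Phase.ON_FORK


def advance(use_case: UseCase, metrics_ok: bool = True) -> Phase:
    """Move one phase forward; leaving AB_TESTING requires healthy metrics."""
    if use_case.phase is Phase.LEGACY_DISABLED:
        return use_case.phase
    if use_case.phase is Phase.AB_TESTING and not metrics_ok:
        return use_case.phase  # stay in A/B testing until metrics recover
    use_case.phase = Phase(use_case.phase.value + 1)
    return use_case.phase


def next_to_migrate(use_cases: List[UseCase]) -> Optional[UseCase]:
    """Pick the lowest-risk use case that is not fully migrated yet."""
    pending = [u for u in use_cases if u.phase is not Phase.LEGACY_DISABLED]
    return min(pending, key=lambda u: u.risk, default=None)


def migration_done(use_cases: List[UseCase]) -> bool:
    """The 'done' state: every use case runs without the legacy stack."""
    return all(u.phase is Phase.LEGACY_DISABLED for u in use_cases)


if __name__ == "__main__":
    cases = [UseCase("cloud gaming", risk=9), UseCase("audio call", risk=1)]
    first = next_to_migrate(cases)
    print(first.name, advance(first).name)
```

Gating the final transition on `metrics_ok` mirrors the rule that the legacy stack is disabled only after the A/B test shows no regression.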

Step 6: Measure and Communicate Improvements

Throughout the migration, Meta tracked three key performance indicators:

- Binary size – thanks to the ability to strip out unused code paths, the new stack was leaner.

- Performance – upstream optimizations (like improved jitter buffers) yielded lower latency and higher call quality.

- Security – each upstream release brought the latest security patches, removing the fear of delayed fixes in the fork.

Regular internal blog posts and presentations celebrated wins and taught other teams how to avoid the forking trap.

Tips for Your Own Migration

- Start with a clear inventory of all dependencies on your fork. You can’t migrate what you don’t know.

- Invest heavily in testing infrastructure before you begin the migration. A broken CI pipeline will stall progress.

- Automate symbol renaming as much as possible. Doing it manually is error-prone: a single missed symbol can surface as a duplicate-symbol link error or, worse, as a silent ODR violation that only misbehaves at runtime.

- Use feature flags to enable A/B testing from day one. It’s the only safe way to roll out changes to billions of users.

- Plan for the long haul. Migrating more than 50 use cases took years. Be patient and keep the team motivated by celebrating small wins.

- Never let your new stack diverge. After migration, enforce a policy that every upstream upgrade must be merged within one sprint. Automate this with your CI pipeline.

By following these steps, Meta not only escaped the forking trap but also built a sustainable model for staying current with WebRTC’s evolution—while still preserving the custom features that power their unique real-time communication experiences.
