How Spotify Wrapped 2025 Uncovers Your Year's Listening Story: A Technical Guide

Overview

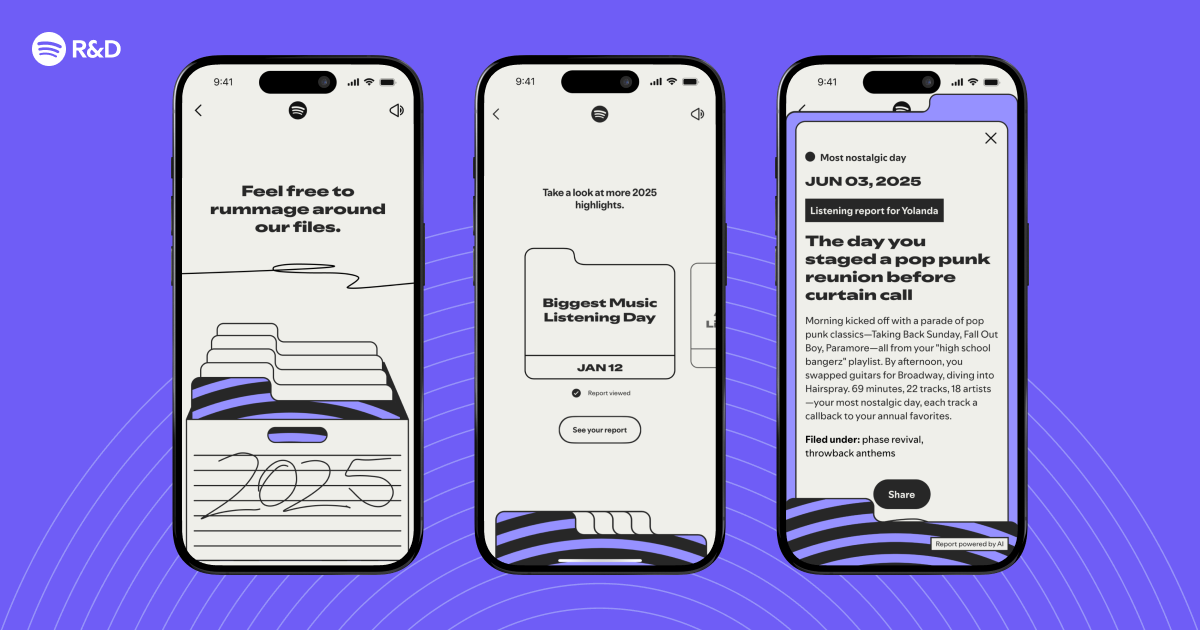

Every December, Spotify Wrapped transforms millions of listeners' raw streaming data into a personalized year-in-review story. The 2025 edition isn't just a collection of top artists and genres—it identifies interesting listening moments and weaves them into a narrative that captures your musical journey. This tutorial walks through the engineering and machine learning pipeline behind that magic, from ingesting petabytes of logs to generating a coherent story. You'll learn how Spotify engineers detect anomalies, cluster listening patterns, and craft a narrative that feels uniquely yours. By the end, you'll have a blueprint for building your own 'year in review' system.

Prerequisites

- Data Engineering: Familiarity with big data tools (Apache Spark, Google BigQuery) and streaming architectures (Kafka, Pub/Sub).

- Programming: Python (pandas, numpy) and SQL for data manipulation.

- Machine Learning Basics: Knowledge of clustering (K-Means, DBSCAN), anomaly detection (Isolation Forest), and time-series analysis.

- Natural Language Processing (NLP): Experience with template-based NLG or simple language models (e.g., GPT-based summarization).

- Software Engineering: Understanding of API design and A/B testing frameworks.

Step-by-Step Instructions

Step 1: Data Ingestion and Preprocessing

Spotify collects streaming events (play, skip, like, share) in real time. For Wrapped, we extract events from the 2025 calendar year. Each event includes user ID, track ID, timestamp, device type, and listen context (playlist, radio, search). Preprocessing steps:

- Partition by user and month: Use Spark to repartition data to avoid skewed joins.

- Filter out noise: Remove plays shorter than 10 seconds (likely accidental).

- Feature engineering: For each user, compute daily/weekly listening volumes, genre diversity, skip rates, and repeat listening ratios.

- Normalize timestamps: Convert to UTC and flag time zones for local time analysis (e.g., late-night listening).
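The timestamp normalization step can be sketched as follows. This is a minimal illustration, assuming each event carries a timezone-aware UTC timestamp and the user's UTC offset in minutes. The function names, the offset field, and the 00:00 to 05:00 late-night window are assumptions made for this example, not details taken from Spotify's pipeline.

```python
from datetime import datetime, timedelta, timezone


def local_hour(utc_ts, utc_offset_minutes):
    """Return the local hour (0-23) for a timezone-aware UTC datetime.

    utc_offset_minutes is a hypothetical per-user field, e.g. -300 for UTC-5.
    """
    local_tz = timezone(timedelta(minutes=utc_offset_minutes))
    return utc_ts.astimezone(local_tz).hour


def is_late_night(utc_ts, utc_offset_minutes, start_hour=0, end_hour=5):
    """Flag plays whose local hour falls in [start_hour, end_hour)."""
    return start_hour <= local_hour(utc_ts, utc_offset_minutes) < end_hour


# A play logged at 07:30 UTC by a user at UTC-5 happened at 02:30 local time.
play = datetime(2025, 3, 10, 7, 30, tzinfo=timezone.utc)
flagged = is_late_night(play, -300)
```

A fixed offset ignores daylight saving time. If the user's IANA time zone name is available, the standard-library `zoneinfo` module handles those transitions.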

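# Example: PySpark job to aggregate streaming events per user per day

from pyspark.sql import functions as F

# Assumes an active SparkSession named `spark`, a `duration` column in seconds,
# and a numeric 0/1 `skip_flag` column

events_df = spark.read.parquet("spotify_streams_2025")

# Keep only plays of at least 10 seconds

events_clean = events_df.filter(F.col("duration") >= 10)

daily_agg = events_clean.groupBy("user_id", "event_date").agg(
    F.count("*").alias("listens"),
    F.countDistinct("track_id").alias("unique_tracks"),
    (F.sum("skip_flag") / F.count("*")).alias("skip_rate"),
)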
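The feature engineering step lists genre diversity and repeat listening ratios without defining them. One possible definition is sketched below in plain Python: diversity as the Shannon entropy of the genre distribution, and repeat ratio as the share of plays that revisit an already-heard track. Both definitions, and the `genre`, `track_id`, and `skipped` field names, are assumptions for illustration.

```python
import math
from collections import Counter


def user_features(events):
    """Compute per-user features from a list of event dicts.

    Each event is assumed to have 'track_id', 'genre', and 'skipped' keys
    (hypothetical field names for this sketch).
    """
    if not events:
        return {"genre_diversity": 0.0, "skip_rate": 0.0, "repeat_ratio": 0.0}

    n = len(events)
    genre_counts = Counter(e["genre"] for e in events)
    # Shannon entropy in bits: 0 for a single genre, higher when plays spread out.
    diversity = -sum((c / n) * math.log2(c / n) for c in genre_counts.values())

    skip_rate = sum(1 for e in events if e["skipped"]) / n

    unique_tracks = len({e["track_id"] for e in events})
    # Share of plays that repeat a track the user already played.
    repeat_ratio = (n - unique_tracks) / n

    return {
        "genre_diversity": diversity,
        "skip_rate": skip_rate,
        "repeat_ratio": repeat_ratio,
    }


sample = [
    {"track_id": "t1", "genre": "pop", "skipped": False},
    {"track_id": "t1", "genre": "pop", "skipped": False},
    {"track_id": "t2", "genre": "rock", "skipped": True},
    {"track_id": "t3", "genre": "rock", "skipped": False},
]
features = user_features(sample)
```

At production scale the same aggregations would run in Spark. The pure-Python version only makes the definitions concrete.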

Step 2: Defining 'Interesting Listening Moments'

Interesting moments are statistically unusual or emotionally resonant patterns. We use three techniques:

- Anomaly Detection: Detect spikes in listening (e.g., 200% more listens in one week than in the user's typical week) by running an Isolation Forest on weekly listen counts. Flag these as 'binge moments'.

- Change Point Detection: Identify shifts in genre preference (e.g., switching from pop to classical) using binary segmentation on daily genre percentages.

- Temporal Clustering: Cluster users' hourly listening patterns to find signature listening times (e.g., morning commute vs. wind-down), using K-Means on each user's 24-bin hourly histogram.
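For the anomaly detection technique in the list above, a simpler rule can serve as a baseline before reaching for an Isolation Forest. The sketch below is not an Isolation Forest. It swaps in the modified z-score (median and median absolute deviation), a common rule of thumb for flagging outliers in a single series. The function name and the 3.5 threshold are assumptions for illustration.

```python
from statistics import median


def flag_binge_weeks(weekly_counts, threshold=3.5):
    """Return indices of weeks with an unusually high listen count.

    Uses the modified z-score 0.6745 * (x - median) / MAD, which is
    less sensitive to the spike itself than a mean/std z-score.
    """
    if not weekly_counts:
        return []
    med = median(weekly_counts)
    deviations = [abs(x - med) for x in weekly_counts]
    mad = median(deviations)
    if mad == 0:
        # More than half the weeks are identical, so this rule cannot
        # scale deviations; a fallback rule would be needed here.
        return []
    return [
        i
        for i, x in enumerate(weekly_counts)
        if 0.6745 * (x - med) / mad > threshold
    ]


weekly = [100, 110, 95, 105, 98, 320, 102, 99]
binge_weeks = flag_binge_weeks(weekly)  # index 5 is the spike week
```

Only upward deviations are flagged, since a binge is a surge rather than a quiet week.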

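# Python example: change point detection using the ruptures library

import numpy as np

import ruptures as rpt

# user_genre_series: genre fractions over time, shape (n_days, n_genres)

signal = np.asarray(user_genre_series, dtype=float)

# PELT is used here; rpt.Binseg offers binary segmentation with the same fit/predict calls

model = rpt.Pelt(model="l2").fit(signal)

change_points = model.predict(pen=10)

# Each index marks where a genre shift occurred; the last entry is always len(signal)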
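Raw breakpoint indices are hard to narrate, so a natural next step is to turn them into labeled segments. The sketch below assumes the breakpoints follow the ruptures convention (sorted, strictly increasing end indices, with the last one equal to the series length). The function name and the `genre_names` argument are hypothetical.

```python
def describe_segments(genre_fractions, breakpoints, genre_names):
    """Convert breakpoints into (start, end, dominant_genre) tuples.

    genre_fractions: list of per-day lists, one fraction per genre.
    breakpoints: sorted exclusive end indices; the last equals
                 len(genre_fractions), as ruptures' predict() returns.
    """
    segments = []
    start = 0
    for end in breakpoints:
        rows = genre_fractions[start:end]
        # Average each genre's share across the days in this segment.
        means = [sum(col) / len(rows) for col in zip(*rows)]
        dominant = genre_names[means.index(max(means))]
        segments.append((start, end, dominant))
        start = end
    return segments


fractions = [[0.8, 0.2], [0.7, 0.3], [0.9, 0.1], [0.1, 0.9], [0.2, 0.8]]
segments = describe_segments(fractions, [3, 5], ["pop", "classical"])
```

Two consecutive segments with different dominant genres are a candidate 'Genre Exploration' moment.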

Step 3: Personalization Algorithm

Each user's story is unique. We score every potential 'moment' using a multi-factor formula:

Score = (Novelty × Emotional Impact × Replay Value) / Baseline

- Novelty: Inverse frequency of similar moments across all users (rarity).

- Emotional Impact: Derived from track valence (via audio features) and user interaction (likes, shares).

- Replay Value: How often the user returned to that track/playlist after the moment.

- Baseline: User's average listening intensity to avoid overrepresenting heavy listeners.

We then rank moments for each user and select the top 3-5.

# Scoring example

novelty = 1 / (global_moment_freq + 1)

# Parentheses make the like multiplier explicit: 2x if liked, 0.5x otherwise

emotional = user_valence_surge * (2 if user_liked else 0.5)

score = (novelty * emotional * replay_count) / user_baseline
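The ranking and top 3-5 selection described above can be sketched with the standard library. This is an illustration only: the dictionary keys are hypothetical, and it assumes the like signal acts as a multiplier on the valence surge (2 if liked, 0.5 otherwise).

```python
import heapq


def score_moment(moment, user_baseline):
    """Score = (novelty * emotional impact * replay value) / baseline."""
    novelty = 1 / (moment["global_moment_freq"] + 1)
    emotional = moment["valence_surge"] * (2 if moment["liked"] else 0.5)
    return (novelty * emotional * moment["replay_count"]) / user_baseline


def top_moments(moments, user_baseline, k=5):
    """Return the k highest-scoring moments, best first."""
    if user_baseline <= 0:
        raise ValueError("user_baseline must be positive")
    return heapq.nlargest(
        k, moments, key=lambda m: score_moment(m, user_baseline)
    )


candidates = [
    {"name": "a", "global_moment_freq": 9, "valence_surge": 1.0,
     "liked": True, "replay_count": 5},
    {"name": "b", "global_moment_freq": 0, "valence_surge": 0.5,
     "liked": False, "replay_count": 8},
    {"name": "c", "global_moment_freq": 99, "valence_surge": 1.0,
     "liked": True, "replay_count": 10},
]
best = top_moments(candidates, user_baseline=2.0, k=2)
```

`heapq.nlargest` avoids sorting the full candidate list, which matters little for one user but adds up across millions.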

Step 4: Narrative Generation

With moments selected, we generate a story. We use template-based NLG with slots filled by dynamic data (e.g., artist names, dates, adjectives). Categories include:

- Breakthrough Artist: First discovered in 2025 and most played.

- Binge Session: Period of intense listening to one playlist.

- Genre Exploration: A shift to a new genre.

- Throwback Track: An older song revived.

Example template: "In {month}, you went on a {artist} binge, listening {count} times in {days} days."

We also add contextual humor (e.g., "You listened to sad songs at 2 AM. Everything okay?") based on time clusters. The generated text is then localized into 50+ languages.

# Simple template filler

moment = {'artist':'Taylor Swift','month':'March','count':42,'days':5}

template = "In {month}, you went on a {artist} binge, listening {count} times in {days} days."

story = template.format(**moment)
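Extending the filler to all four categories might look like the sketch below. Only the binge template comes from the example above. The other template wordings, the category keys, and the slot names are invented for illustration. Returning `None` when a slot is missing is one way to avoid showing a half-filled sentence.

```python
TEMPLATES = {
    "binge_session": (
        "In {month}, you went on a {artist} binge, "
        "listening {count} times in {days} days."
    ),
    # The wordings below are illustrative placeholders.
    "breakthrough_artist": "You discovered {artist} in {month}, and kept coming back.",
    "genre_exploration": "In {month}, you branched out from {old_genre} into {new_genre}.",
    "throwback_track": "{track} made a comeback in your {month} rotation.",
}


def render_moment(category, data):
    """Fill the template for a category, or return None if it cannot be filled."""
    template = TEMPLATES.get(category)
    if template is None:
        return None
    try:
        return template.format(**data)
    except KeyError:
        # A required slot is missing: skip the moment rather than
        # show a broken sentence.
        return None


story = render_moment(
    "binge_session",
    {"artist": "Taylor Swift", "month": "March", "count": 42, "days": 5},
)
```

Keeping templates in a plain mapping also makes localization simpler, since each language can supply its own mapping with the same slot names.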

Common Mistakes

- Data bias toward heavy users: Without normalization, the top 1% of listeners dominate. Always compare against user baseline.

- Ignoring stream quality: Offline and cached plays belong in the dataset, but they may lack timestamps and can be reported more than once. Include them and deduplicate heuristically.

- Overcomplicating narrative: Too many variables make stories nonsensical. Stick to 3-5 clear categories.

- Forgetting GDPR/CCPA: User data must be anonymized. Use differential privacy when computing global frequencies.

- A/B testing pitfalls: Rolling out Wrapped to all users simultaneously can cause server overload. Test with 5% first.
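The staged rollout in the last item is often implemented with deterministic hash bucketing, so that a user's assignment is stable across requests. The sketch below is one such approach, not a description of Spotify's system. The function name and the salt value are hypothetical.

```python
import hashlib


def in_rollout(user_id, percent, salt="wrapped-2025"):
    """Return True if the user falls inside the first `percent` of buckets.

    Hashing (salt, user_id) gives a stable, roughly uniform bucket in
    0..9999, so percentages resolve to 0.01% steps.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percent * 100


# Start with 5% of users, then raise the percentage as capacity is confirmed.
early_access = in_rollout("user-123", 5)
```

Because the bucket depends only on the salt and user ID, raising the percentage from 5 to 10 keeps every user who was already included.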

Summary

Building a 'year in review' system like Spotify Wrapped 2025 requires a pipeline that ingests massive streaming data, detects interesting listening anomalies, personalizes highlights with a scoring algorithm, and generates a narrative that feels both accurate and delightful. By following these four steps—data preprocessing, moment detection, personalization, and NLG—you can create a scalable system that tells every user their own unique story while avoiding common biases and data pitfalls.
